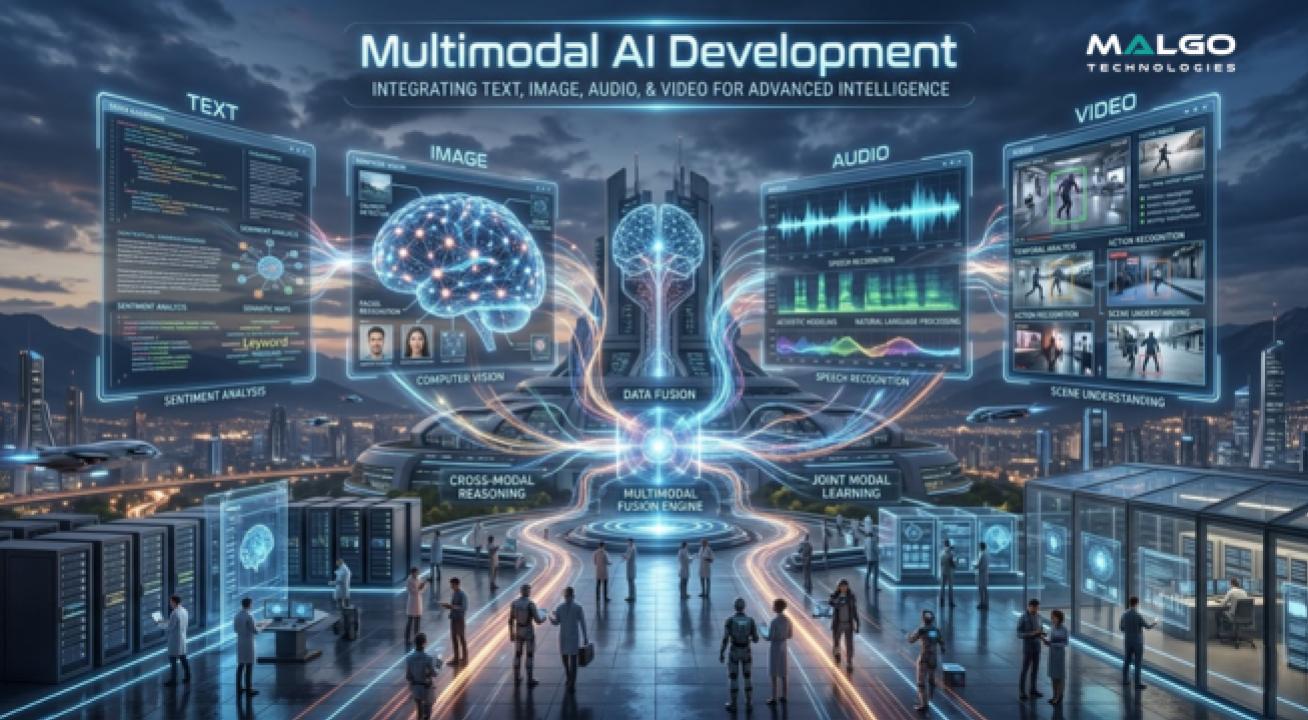

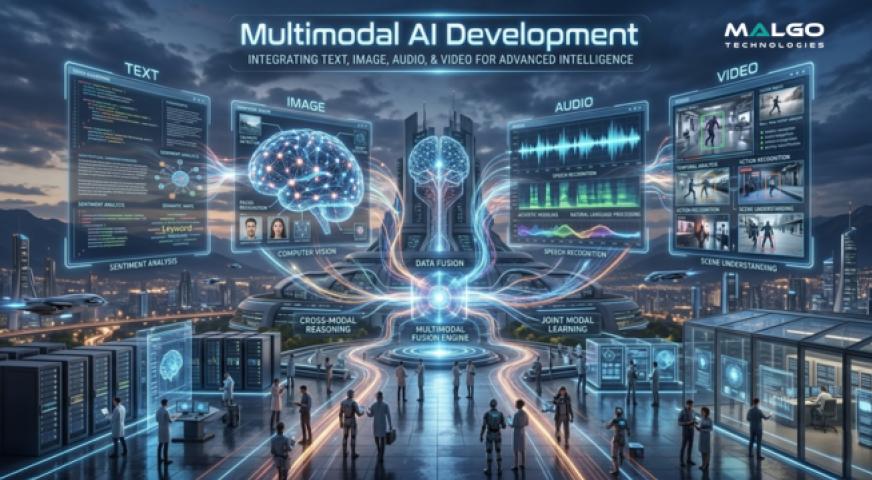

Multimodal AI is a type of artificial intelligence that can process and understand different kinds of data like text, images, and audio at the same time to make better decisions. Instead of looking at just one type of information, these systems combine various inputs to mimic how humans perceive the world. This technology helps computers grasp the full context of a situation rather than seeing data in isolated pieces.

What is Multimodal AI Development?

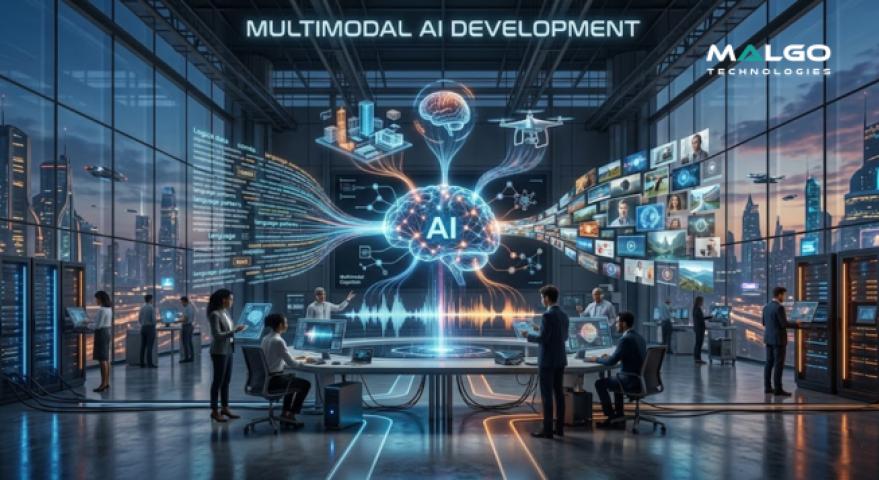

Multimodal AI development involves building computer systems that can handle multiple streams of information at once. In the past, most AI models were built to do just one thing, such as reading text or identifying an object in a photo. Now, developers create systems that can see an image and hear a voice description to understand exactly what is happening in a scene.

These systems typically learn to represent different data types in a common numerical form, so that related items can be matched across them. For example, the AI learns that the word "dog" in a text file describes the four-legged animal in a video. This combined way of learning makes the technology more helpful for tasks that depend on real-world context.
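The "dog" example can be illustrated with a minimal sketch. It assumes both the text and the video frames have already been turned into vectors in one shared space; the vectors and names below (`text_embeddings`, `video_frame_embeddings`, `best_matching_frame`) are hand-written and hypothetical, standing in for what a trained model would produce.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings, written by hand for illustration only.
# A real system would obtain these from trained text and vision encoders.
text_embeddings = {
    "dog": [0.9, 0.1, 0.0],
    "car": [0.0, 0.2, 0.9],
}
video_frame_embeddings = {
    "frame_with_dog": [0.8, 0.2, 0.1],
    "frame_with_car": [0.1, 0.1, 0.95],
}

def best_matching_frame(word):
    """Pick the video frame whose vector lies closest to the word's vector."""
    query = text_embeddings[word]
    return max(
        video_frame_embeddings,
        key=lambda name: cosine_similarity(query, video_frame_embeddings[name]),
    )

print(best_matching_frame("dog"))
```

Because the word and the matching frame point in nearly the same direction, the lookup links them even though one started as text and the other as pixels.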

Why Businesses Need Multimodal AI Development Services

The demand for multimodal AI development services is growing because companies hold large amounts of mixed data that go unused. A business might have recorded customer calls, emails, and store videos that are too time-consuming for people to sort through manually. A system that can analyze all of these together helps surface patterns that were previously hidden.

Another reason for this growth is the need for more natural ways for people to use technology. Customers want to show a picture of a problem and talk about it rather than typing a long form. Multimodal systems make these smooth interactions possible, which keeps customers happy and saves time for support teams.

Features of Multimodal AI Development Solutions

One primary feature of multimodal AI development solutions is the ability to perform cross-modal retrieval. This means a user can find a specific part of a video by just typing a description of what they are looking for. The system understands the action and the sound in the video rather than just looking at the file label.
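A rough sketch of how such a search might work is shown below. It assumes each video segment has already been encoded into a vector and that the typed description is encoded into the same space; the `Segment` class, the `search` function, and every vector here are illustrative assumptions, not a specific product's API.

```python
import math
from dataclasses import dataclass

@dataclass
class Segment:
    start: float      # segment start time in seconds
    end: float        # segment end time in seconds
    embedding: list   # hypothetical vector summarizing picture and sound

def cosine(a, b):
    """Cosine similarity, returning 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def search(query_embedding, segments, top_k=2):
    """Return the top_k segments most similar to the query, best first."""
    ranked = sorted(
        segments,
        key=lambda s: cosine(query_embedding, s.embedding),
        reverse=True,
    )
    return ranked[:top_k]

# Hand-written index; comments describe what each segment is imagined to contain.
index = [
    Segment(0.0, 12.0, [0.9, 0.1, 0.0]),   # a dog barking in a yard
    Segment(12.0, 30.0, [0.1, 0.9, 0.1]),  # a person speaking
    Segment(30.0, 45.0, [0.7, 0.0, 0.6]),  # a dog riding in a car
]

# Stand-in for the encoded text query "a dog barking".
query = [1.0, 0.0, 0.0]

results = search(query, index)
for segment in results:
    print(f"{segment.start:.0f}s to {segment.end:.0f}s")
```

The search returns time ranges rather than file names, which is what lets a user jump to the right moment in a video without relying on its label.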

Another key feature is real-time processing of different sensory inputs to provide instant feedback. This is useful for things like smart security systems that need to see a person and hear an alarm at the same moment. For this to work, the inputs must be synced in time so that the system does not pair a sound with the wrong moment in the video.
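One simple way to keep inputs in sync is to pair events from each stream by timestamp. The sketch below assumes both streams share a clock and that a half-second tolerance is acceptable; the `Event` class, the `pair_events` function, and the sample data are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Event:
    timestamp: float  # seconds since the shared stream start
    label: str

def pair_events(video_events, audio_events, tolerance=0.5):
    """Pair each video event with the nearest audio event within `tolerance` seconds."""
    pairs = []
    for v in video_events:
        nearest = min(
            audio_events,
            key=lambda a: abs(a.timestamp - v.timestamp),
            default=None,  # handles an empty audio stream
        )
        if nearest is not None and abs(nearest.timestamp - v.timestamp) <= tolerance:
            pairs.append((v, nearest))
    return pairs

# Illustrative data: the first person detection coincides with an alarm,
# the second has no nearby sound and is left unpaired.
video = [Event(10.0, "person_detected"), Event(42.0, "person_detected")]
audio = [Event(10.2, "alarm"), Event(30.0, "door_slam")]

matched = pair_events(video, audio)
for v, a in matched:
    print(f"{v.label} at {v.timestamp}s pairs with {a.label} at {a.timestamp}s")
```

A tighter tolerance reduces false pairings but risks missing real ones when one sensor lags, so the value is a tuning choice rather than a fixed rule.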

Benefits of Multimodal AI Development Systems

Using these systems allows companies to gain a more complete view of their operations and customer needs. By analyzing social media posts that include both captions and photos, a brand can better gauge the mood of its audience, since a caption and its image do not always say the same thing. This leads to better choices and more accurate forecasts of what people will want to buy in the future.
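As a small illustration of combining a caption and a photo, the sketch below blends two sentiment scores with a weighted average, an approach often called late fusion. The scores, the weight, and the `fuse_sentiment` function are assumptions made for the example; in practice each score would come from a separate text or image model.

```python
def fuse_sentiment(caption_score, photo_score, caption_weight=0.5):
    """Blend two sentiment scores in [-1, 1] into one using a weighted average."""
    if not 0.0 <= caption_weight <= 1.0:
        raise ValueError("caption_weight must be between 0 and 1")
    return caption_weight * caption_score + (1.0 - caption_weight) * photo_score

# Hypothetical post: an upbeat caption attached to a photo of a damaged product.
caption_score = 0.6   # assumed output of a text sentiment model
photo_score = -0.8    # assumed output of an image sentiment model

overall = fuse_sentiment(caption_score, photo_score)
print(f"overall sentiment: {overall:.2f}")
```

Read alone, the caption looks positive; once the photo is taken into account the overall score turns slightly negative, which is the kind of signal a text-only analysis would miss.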

Efficiency is another major benefit, since one model can do work that used to require several separate programs. This reduces the amount of code to manage and simplifies the technical setup. It can also make the final product more responsive for the person using it.

Why Choose Malgo for Multimodal AI Development

Malgo focuses on building systems that are easy to use and solve specific business problems. Its approach starts with the data a company already has and builds a custom plan to get more value from it. Malgo prioritizes clear logic so that the new technology fits into the way a business already works.

The team at Malgo stays updated on the latest shifts in machine learning to provide modern solutions. Each project gets individual attention to ensure the AI understands the specific language or visual cues of a particular industry. This dedication helps in creating tools that are reliable and produce consistent results.

Industry Applications for Multimodal AI

In the medical field, this technology helps doctors by looking at X-rays while also reading a patient’s written history. Combining these two data types can support a faster and more accurate diagnosis. It acts as an extra set of eyes that can spot patterns a human might miss when looking at separate files.

In the retail sector, multimodal systems improve the shopping experience by allowing customers to search for products using photos. A shopper can take a picture of a shirt they like, and the AI will find the exact item or similar ones in the store’s inventory. This bridge between the physical and digital worlds makes buying things much simpler.