Seedance Next is where we showcase our latest research and development. In this experimental playground, we push the boundaries of what is possible with AI video. Its main focus is the "Co-Pilot" experience, in which the AI actively collaborates with the author, offering cinematic perspectives and emotional cues rather than just following directions.

Comprehending Semantic Scenes

Seedance Next is built on a "Semantic Understanding" layer, which lets the AI grasp the story behind a prompt. When you compose a scene about "betrayal," the AI suggests lighting setups and camera angles that evoke that specific emotion. The AI becomes a creative aid rather than just a rendering engine.

The Roadmap for Real-Time Generation

One of the more challenging goals of the Seedance Next research lab is real-time interactivity. We are developing architectures that could eventually allow creators to "play" their videos, changing the action on the fly, much like in a video game. This would transform live broadcasting and participatory storytelling.

FAQ: Exploring the Frontier

Can I currently utilise Seedance Next's features?

Yes, in part. A few experimental features are made available to our community for beta testing before the release of the stable Pro and 2.0 editions.

What is "Emotional Lighting"?

It is a feature in development that automatically adjusts the colour grading to match the tone of the story.

Will Seedance Next support interactive videos?

That's the goal. The foundation for "branching" AI narratives is being laid.

How can I take part in research and development?

Active members of our professional community are regularly invited to participate in the "Seedance Next" beta programs.

Does it use 3D?

Yes. We are testing methods for seamless data transfer between 3D applications and the Seedance engine.

Seedance Next: A Look at the Future of AI-Human Collaboration

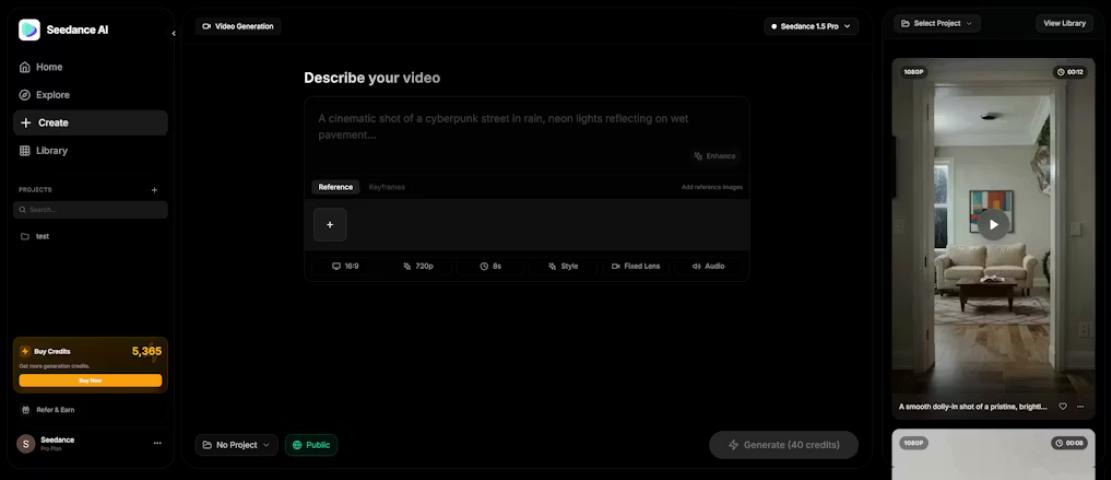

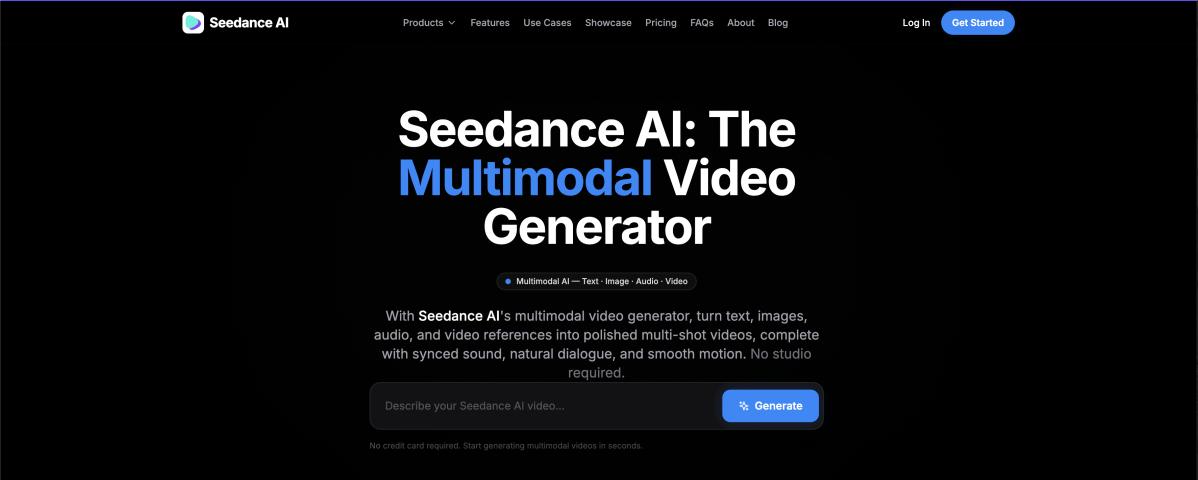

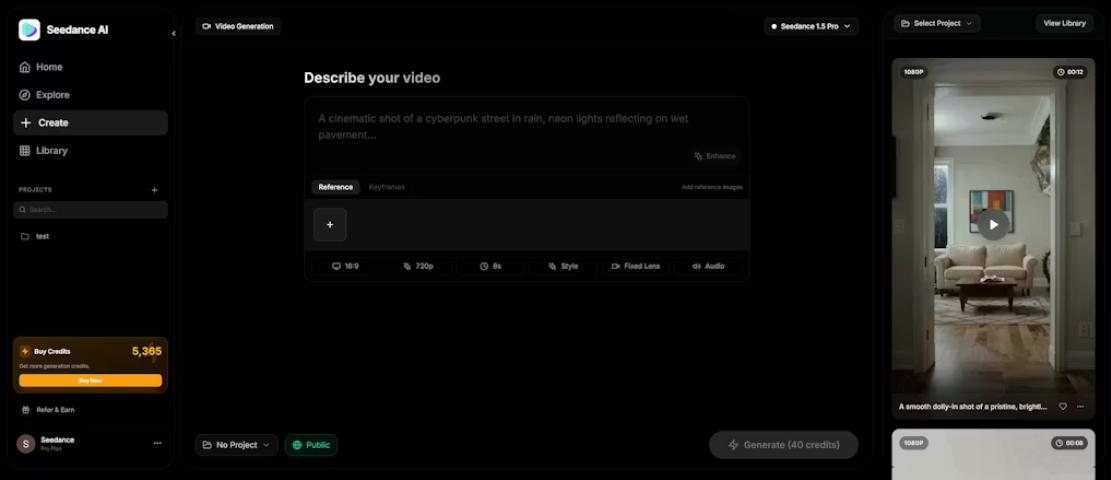

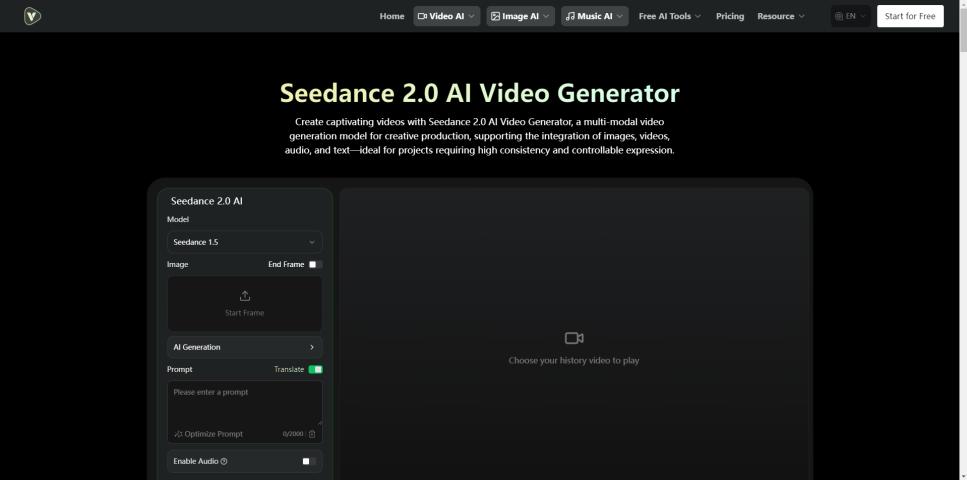

Image credits: seedance

Written by

help.seedanceai

10 hours ago
