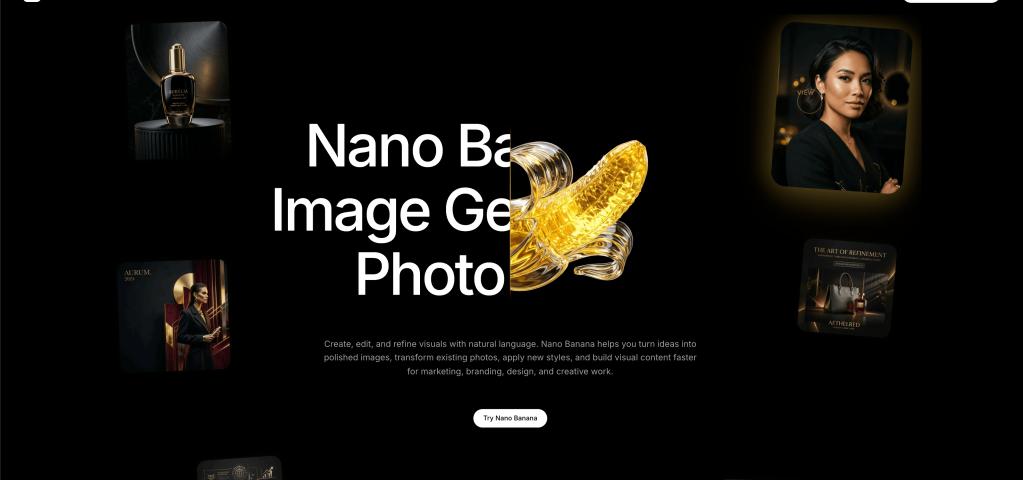

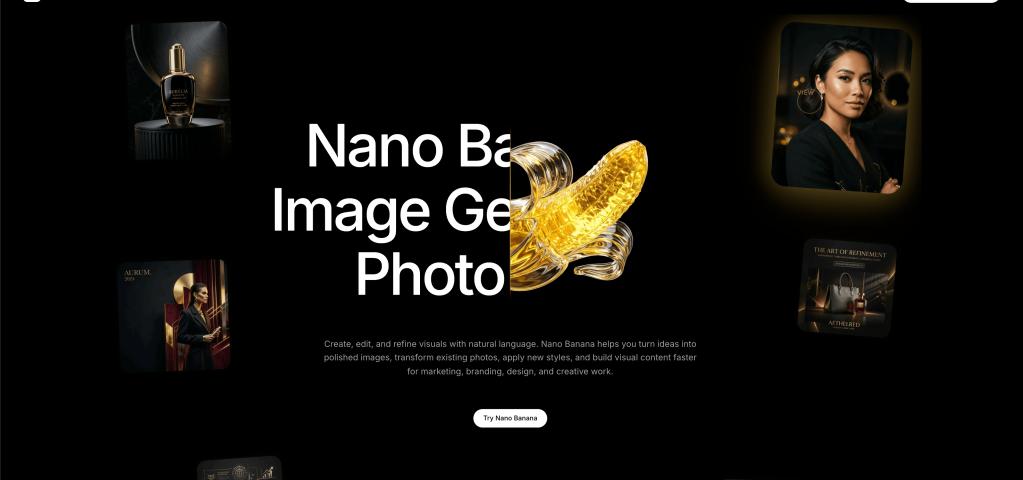

In the fast-paced world of online content creation and marketing, speed matters as much as quality. Nano Banana 2 was built for exactly that. As the "Flash" tier of the ecosystem, it generates images almost instantly, so creators can iterate on hundreds of ideas in the time it used to take to produce one.

Best for Iteration

The creative process is rarely linear. It involves experimenting, changing direction, and getting feedback quickly. This "Prototyping Phase" is where Nano Banana 2 fits best. Because it returns results in seconds, designers can watch their ideas take shape almost in real time. That shortens the path from idea to finished asset, which makes Nano Banana 2 a valuable tool for brainstorming and rapid asset creation.

Maintaining Quality at Scale

It is easy to assume that faster generation means lower quality, but Nano Banana 2 challenges that assumption. By using a streamlined latent diffusion process, it keeps the ecosystem's core aesthetic strengths while cutting the heavy computational overhead that the "Pro" versions require. This makes it the best choice for social media managers, web designers, and anyone else who needs high-quality images quickly.

Frequently Asked Questions (FAQ)

How much faster is Nano Banana 2 than the Pro model?

Nano Banana 2 is designed for speed, so it typically returns results 2 to 3 times faster than the Pro tier. This makes it well suited to real-time applications and high-volume tasks.

Does Nano Banana 2 support image-to-image prompts?

Yes, the model can take a reference image and make changes to it or change its >

Can Nano Banana 2 be used for mobile workflows?

Yes. Its efficient design keeps Nano Banana 2 lightweight, which makes it the best choice for developers who want to add high-quality AI generation to mobile apps.

Is Nano Banana 2 suitable for professional social media posts?

Sure. Many creators use it to make high-quality graphics for Instagram, TikTok, and the web where speed and >

What makes generation so fast?

"Distilled Reasoning" is the secret. It condenses the model's knowledge into a faster, more efficient path without losing the ability to understand complicated prompts.