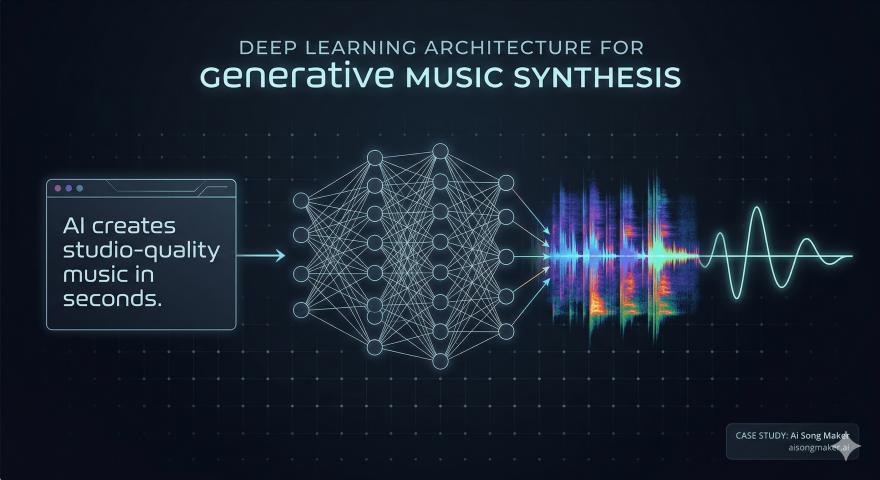

The landscape of music production is undergoing a seismic shift. For decades, creators relied on loops, samples, and manual synthesis. However, the emergence of Riffusion technology has introduced a radical new way to think about sound: generating music through visual data.

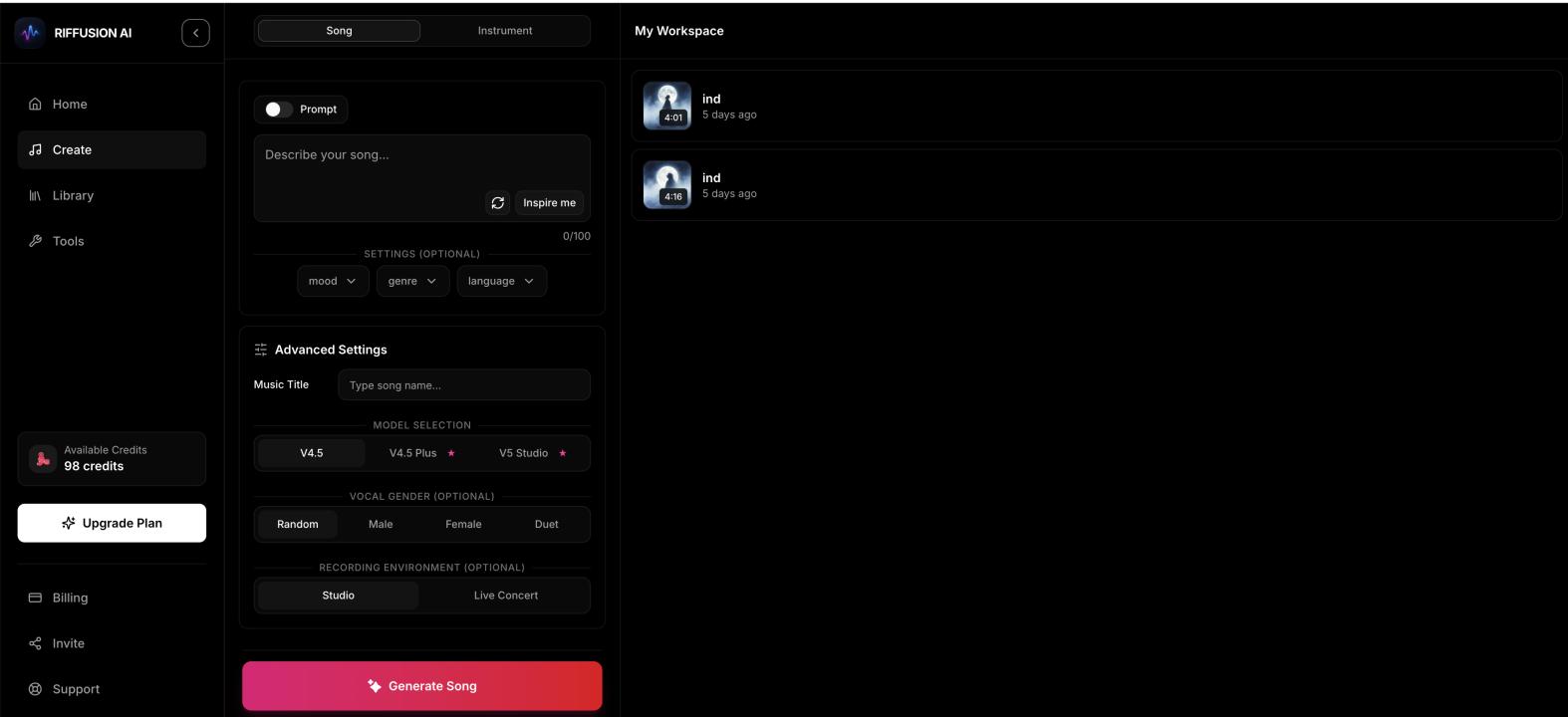

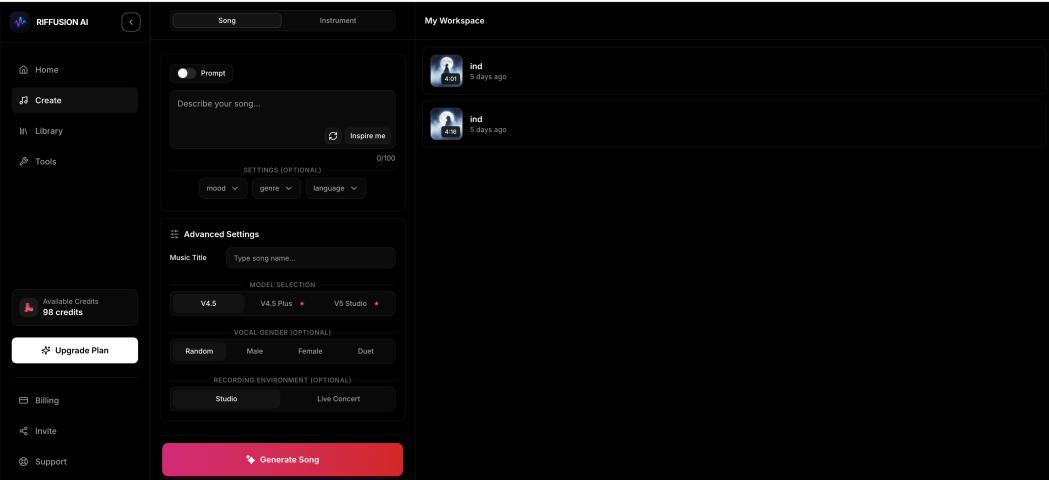

What is Riffusion AI Music?

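Unlike traditional AI music models that work solely with MIDI or audio wave patterns, Riffusion generates spectrograms, which are images of sound, and then converts those images into audio.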

The Creative Edge for Producers

Why are developers and musicians flocking to Riffusion AI Music? The answer lies in its unpredictability and originality.

Unique Text-to-Audio: Simply describe the mood, genre, or instruments, and the model creates a sonic landscape from scratch.

Infinite Variation: Because each track is generated visually from random noise, the same prompt rarely produces the same result twice, providing a constant stream of inspiration for producers.

Seamless Experimentation: It’s the perfect playground for those who want to push the boundaries of electronic, lo-fi, and ambient music.
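To make the idea of generating music visually more concrete, here is a minimal sketch of the round trip between sound and image. It turns a test tone into a magnitude spectrogram (a 2D array that can be viewed as a picture) and then rebuilds audio from that picture alone using the Griffin-Lim phase-estimation algorithm. This is an illustration under stated assumptions, not Riffusion's actual code: the sample rate, window sizes, and helper names (`stft`, `istft`, `griffin_lim`) are all chosen for the example, and only NumPy is required.

```python
import numpy as np

SAMPLE_RATE = 8000  # Hz; illustrative value, not a Riffusion setting
FRAME = 256         # samples per analysis window (assumed)
HOP = 64            # samples between successive windows (assumed)


def stft(signal):
    """Short-time Fourier transform: rows are frequency bins, columns are time frames."""
    window = np.hanning(FRAME)
    starts = range(0, len(signal) - FRAME + 1, HOP)
    frames = np.array([signal[s:s + FRAME] * window for s in starts])
    return np.fft.rfft(frames, axis=1).T


def istft(spec, length):
    """Inverse STFT using windowed overlap-add."""
    window = np.hanning(FRAME)
    frames = np.fft.irfft(spec.T, n=FRAME, axis=1)
    out = np.zeros(length)
    norm = np.zeros(length)
    for i, frame in enumerate(frames):
        s = i * HOP
        out[s:s + FRAME] += frame * window
        norm[s:s + FRAME] += window ** 2
    # Guard against division by zero where no window contributes.
    return out / np.maximum(norm, 1e-8)


def griffin_lim(magnitude, length, iterations=30, seed=0):
    """Estimate audio from a magnitude-only spectrogram by iterating on the phase."""
    rng = np.random.default_rng(seed)
    phase = np.exp(2j * np.pi * rng.random(magnitude.shape))  # random starting phase
    for _ in range(iterations):
        audio = istft(magnitude * phase, length)
        phase = np.exp(1j * np.angle(stft(audio)))  # keep phase, discard magnitude
    return istft(magnitude * phase, length)


# One second of a 440 Hz tone stands in for "music".
t = np.arange(SAMPLE_RATE) / SAMPLE_RATE
tone = np.sin(2 * np.pi * 440 * t)

image = np.abs(stft(tone))               # the "picture" of the sound
rebuilt = griffin_lim(image, len(tone))  # audio recovered from the picture alone

# Strongest frequency in the rebuilt audio (1 Hz resolution for a 1 s signal).
peak_hz = np.argmax(np.abs(np.fft.rfft(rebuilt))) * SAMPLE_RATE / len(rebuilt)
print(image.shape, peak_hz)
```

In this sketch the spectrogram comes from real audio. In a generative system, a model would produce the image instead, and a similar image-to-audio step would turn it into sound. The rebuilt tone should have its strongest frequency close to 440 Hz, though the exact waveform differs from the original because the phase is only estimated.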

The Future of Royalty-Free Audio

Content creators are no longer limited to the same overused stock libraries. With Riffusion AI Music, anyone can generate a signature sound that is uniquely theirs. This is not just a tool; it’s a new instrument for the digital age, democratizing sound design for YouTubers, gamers, and indie artists worldwide.

Experience the Sound of Tomorrow

The fusion of visual AI and audio is here. You can start generating your own experimental tracks today by visiting: