Understanding Emotion Recognition Technology

Emotion recognition software solutions represent one of the most intriguing intersections of artificial intelligence and human psychology. At its core, this technology is designed to analyze human emotional states by interpreting facial expressions, voice patterns, body language, and physiological signals. Instead of making decisions based only on actions or words, emotion recognition systems evaluate subtler indicators of sentiment, mood, and intent. In 2026, these systems are no longer experimental tools confined to labs; they are becoming practical solutions applied across industries from customer experience to healthcare diagnostics.

The human emotional landscape is rich and complex, and traditional data analysis methods struggle to capture implicit feelings and reactions. Emotion recognition software bridges this gap by using machine learning algorithms trained on large datasets to detect patterns in human behavior. By converting emotional signals into actionable insights, these solutions aim to give businesses, researchers, and developers a deeper understanding of how users feel, not just what they say.

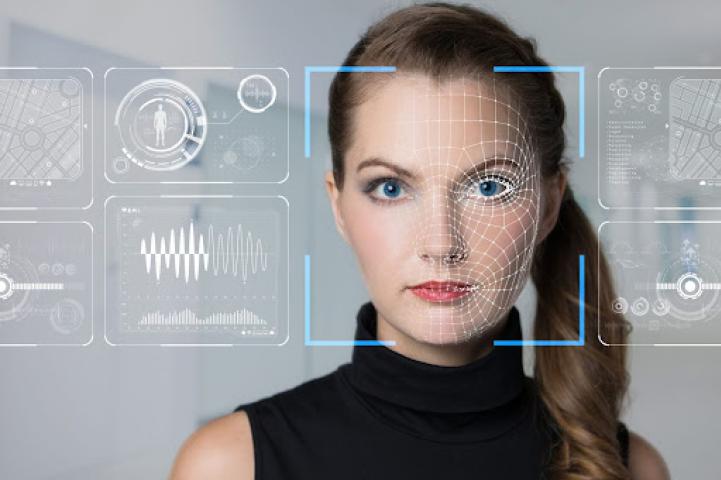

How Emotion Recognition Software Works

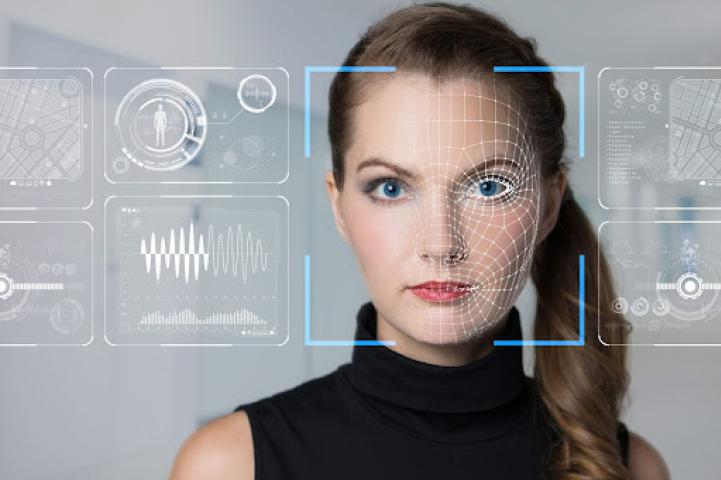

Emotion recognition software solutions operate by combining multiple AI technologies into an integrated system. In facial-expression-based systems, cameras capture facial movements, including micro-expressions: brief, subtle changes in facial muscles that some researchers associate with emotions people are trying to conceal. Machine learning models then analyze these patterns, comparing them with established emotion markers to interpret feelings such as happiness, anger, sadness, surprise, or confusion.

Similarly, voice-based emotion recognition evaluates changes in pitch, tone, pace, and volume to interpret underlying sentiment. Human speech carries subconscious indicators of emotional state that traditional speech-to-text systems cannot capture. By analyzing these acoustic features, emotion recognition tools provide a richer layer of understanding.
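As a rough illustration of what "acoustic features" means, the sketch below computes two of the cues mentioned above: volume, approximated by root-mean-square (RMS) energy, and pitch, approximated by picking the strongest autocorrelation peak. The function names and the 75 to 400 Hz search range are illustrative assumptions; production systems typically rely on more robust pitch trackers and many additional features before any emotion model sees the data.

```python
import math

def rms_energy(samples):
    """Root-mean-square amplitude: a simple proxy for perceived volume."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def estimate_pitch(samples, sample_rate, min_f0=75.0, max_f0=400.0):
    """Estimate the fundamental frequency (Hz) by finding the lag with the
    highest autocorrelation inside the range implied by [min_f0, max_f0]."""
    min_lag = int(sample_rate / max_f0)
    max_lag = int(sample_rate / min_f0)
    best_lag, best_corr = None, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        # Correlate the signal with a copy of itself shifted by `lag` samples.
        corr = sum(samples[n] * samples[n + lag] for n in range(len(samples) - lag))
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return sample_rate / best_lag

# Synthetic test signal: a 200 Hz tone, amplitude 0.5, sampled at 8 kHz for 0.1 s.
sr = 8000
tone = [0.5 * math.sin(2 * math.pi * 200 * n / sr) for n in range(800)]
print(round(estimate_pitch(tone, sr)))  # 200
print(round(rms_energy(tone), 3))       # 0.354
```

In a real pipeline these values would be computed over short overlapping frames, so that changes in pitch and volume across an utterance become inputs to the emotion model.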

Physiological systems examine biometric signals such as heart rate variability or skin conductance to infer emotional arousal. When combined, these methods provide a more complete emotional profile than any single signal can offer on its own.
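One common way to combine modalities is late fusion: each modality produces its own probability distribution over emotion labels, and the system takes a weighted average. The sketch below assumes that approach; the modality names, emotion labels, and weights are hypothetical, and some systems instead fuse raw features earlier in the pipeline.

```python
from typing import Dict

Distribution = Dict[str, float]

def fuse_modalities(predictions: Dict[str, Distribution],
                    weights: Dict[str, float]) -> Distribution:
    """Weighted late fusion: average per-modality emotion probabilities,
    then renormalize so the fused scores sum to 1."""
    fused: Distribution = {}
    total_weight = sum(weights[m] for m in predictions)
    for modality, dist in predictions.items():
        w = weights[modality] / total_weight  # normalized weight for this modality
        for label, p in dist.items():
            fused[label] = fused.get(label, 0.0) + w * p
    norm = sum(fused.values())
    return {label: p / norm for label, p in fused.items()}

# Hypothetical outputs from a face model and a voice model for the same moment.
preds = {
    "face":  {"happy": 0.6, "neutral": 0.3, "angry": 0.1},
    "voice": {"happy": 0.2, "neutral": 0.5, "angry": 0.3},
}
fused = fuse_modalities(preds, {"face": 0.6, "voice": 0.4})
print(max(fused, key=fused.get))  # happy
```

Weighting lets a deployment trust one signal more than another, for example down-weighting the face channel when lighting is poor.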

What makes this approach powerful is not just the capture of isolated emotional cues, but the ability to interpret them in context — comparing emotional responses over time, across situations, and in relation to specific stimuli.
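Interpreting cues over time can start with something as simple as smoothing per-frame scores so that a single ambiguous frame does not dominate the reading. The sketch below uses an exponential moving average, which is one assumed technique among many; `smooth_scores` and its `alpha` parameter are hypothetical names, with higher `alpha` values reacting faster to change.

```python
def smooth_scores(scores, alpha=0.3):
    """Exponential moving average: each output blends the newest per-frame
    score with the running estimate, damping one-off spikes."""
    smoothed = []
    current = None
    for s in scores:
        current = s if current is None else alpha * s + (1 - alpha) * current
        smoothed.append(current)
    return smoothed

# A single spike in, say, a per-frame "surprise" score is heavily damped.
frames = [0.1, 0.1, 0.9, 0.1, 0.1]
print([round(x, 3) for x in smooth_scores(frames)])  # [0.1, 0.1, 0.34, 0.268, 0.218]
```

The same smoothed series can then be compared across sessions or aligned with the timestamps of specific stimuli.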

Why Businesses Are Adopting Emotion Recognition Solutions

In an era where customer experience defines competitive advantage, emotion recognition is emerging as a strategic asset. Companies are deploying emotion recognition software to understand how customers feel during interactions with products, services, and digital interfaces. Rather than relying solely on surveys or click-through rates, organizations can now tap into emotional data to refine user experiences, increase engagement, and reduce friction points.

For example, customer service teams are using emotion recognition to improve support quality. When an AI-enabled call or video agent detects frustration or confusion, it can escalate the interaction to a human representative, adjust its responses, or offer calming language. This capability can improve customer satisfaction and help reduce churn.
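The escalation behavior described above can be expressed as a simple rule. This sketch assumes the emotion model already outputs a frustration score between 0 and 1 for each analysis window; the class name, threshold, and window count are hypothetical. Requiring several consecutive high readings is one way to avoid escalating because of a single misread.

```python
from dataclasses import dataclass, field

@dataclass
class EscalationPolicy:
    """Hypothetical rule: hand the interaction to a human once the frustration
    score stays at or above `threshold` for `patience` consecutive windows."""
    threshold: float = 0.7
    patience: int = 3
    streak: int = field(default=0, init=False)  # consecutive high windows so far

    def update(self, frustration_score: float) -> bool:
        """Record one window's score; return True if it is time to escalate."""
        if frustration_score >= self.threshold:
            self.streak += 1
        else:
            self.streak = 0  # a calmer window resets the count
        return self.streak >= self.patience

policy = EscalationPolicy()
decisions = [policy.update(s) for s in [0.8, 0.9, 0.5, 0.75, 0.8, 0.85]]
print(decisions)  # [False, False, False, False, False, True]
```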

In retail, emotion recognition helps brands interpret consumer reactions to displays, advertisements, and product placements in real time. Instead of guessing what attracts or deters attention, businesses can make data-driven decisions that align with emotional responses.

Even in internal operations, organizations use emotion recognition solutions to better understand employee well-being. Insights into stress levels or engagement patterns enable proactive interventions that improve workplace culture and productivity.

Enhancing Healthcare With Emotional Intelligence

Emotion recognition software solutions are also making waves in healthcare, particularly in fields where understanding emotional and cognitive states can improve outcomes. Mental health professionals are using these systems to monitor patient response patterns, track progress, and tailor therapeutic interventions based on emotional trends over time.

In neurology and psychiatry, emotion recognition technology provides quantitative data that complements subjective reporting. For patients with conditions like autism spectrum disorder or PTSD, traditional assessments may not fully capture emotional shifts. AI-powered systems help clinicians identify subtle changes that might otherwise go unnoticed.

Telemedicine platforms are integrating emotion analytics to enhance virtual consultations. When doctors can assess not just what a patient says but how they express it emotionally, diagnostic accuracy and patient rapport may improve.

Emotion recognition’s ability to provide continuous emotional data opens new possibilities for personalized care plans, early detection of emotional distress, and more empathetic patient support.

AI Ethics and Bias in Emotion Recognition

Despite the promise of emotion recognition software solutions, ethical considerations are at the forefront of industry conversations. Human emotions are deeply personal, and the idea of machines interpreting them raises questions about consent, privacy, and accuracy. Emotion data can be sensitive, and misuse could lead to exploitation or discrimination.

One major concern involves bias in training datasets. If AI models are trained primarily on data representing specific demographic groups, they may misinterpret emotions in individuals from underrepresented populations. This can lead to inaccurate readings, unfair treatment, or misinformed business decisions.
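One practical check for this kind of bias is to evaluate the model separately for each demographic group in a labeled test set and compare the results. The sketch below is a minimal version; the group labels and records are made-up placeholders, and a fuller audit would also examine per-emotion error rates and whether each group has enough samples to draw conclusions.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: iterable of (group, true_label, predicted_label).
    Returns accuracy per group so disparities become visible."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, pred in records:
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}

def max_accuracy_gap(per_group):
    """Difference between the best- and worst-served groups."""
    return max(per_group.values()) - min(per_group.values())

# Placeholder evaluation data for two hypothetical groups, A and B.
records = [
    ("A", "happy", "happy"), ("A", "sad", "sad"),
    ("A", "angry", "angry"), ("A", "sad", "happy"),
    ("B", "happy", "happy"), ("B", "sad", "neutral"),
    ("B", "angry", "angry"), ("B", "sad", "neutral"),
]
per_group = accuracy_by_group(records)
print(per_group)                    # {'A': 0.75, 'B': 0.5}
print(max_accuracy_gap(per_group))  # 0.25
```

A large gap signals that the training data or model needs attention before the system is trusted for that population.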

Transparent data governance and inclusive model training are essential for ethical deployment. Organizations must ensure that their emotion recognition systems respect user privacy, adhere to consent protocols, and maintain high standards of accuracy across diverse populations.

Regulatory frameworks are beginning to emerge to address these concerns, emphasizing accountability and user rights. Forward-thinking businesses that adopt ethical AI practices not only mitigate risk but also build trust with customers and stakeholders.

Applications of Emotion Recognition in Education

Emotion recognition software solutions are finding valuable applications in educational settings. Educators are increasingly aware that student engagement, comprehension, and emotional well-being directly affect learning outcomes. AI-driven emotion detection can show how students respond to lessons, flag moments of confusion, and indicate whether attention is holding.

Online learning platforms are integrating emotion analytics to adapt content delivery in real time. If a system detects signs of frustration or distraction, it can adjust the pace of instruction, introduce interactive elements, or alert instructors to intervene.
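The adaptations described above amount to a mapping from detected learner states to platform actions. This sketch assumes an upstream model that labels each time window as `frustrated`, `distracted`, or something else; the function name, state names, action names, and the three-window threshold are all hypothetical.

```python
def choose_adaptation(state: str, consecutive_windows: int,
                      alert_after: int = 3) -> str:
    """Map a detected learner state to a hypothetical platform action.
    Persistent problems are routed to the instructor; brief ones are
    handled automatically by the platform."""
    if state in ("frustrated", "distracted") and consecutive_windows >= alert_after:
        return "alert_instructor"
    if state == "frustrated":
        return "slow_pace"
    if state == "distracted":
        return "add_interactive_element"
    return "continue"

print(choose_adaptation("frustrated", 1))  # slow_pace
print(choose_adaptation("distracted", 4))  # alert_instructor
```

Keeping the policy separate from the emotion model makes it easy for educators to review and adjust the rules without retraining anything.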

In classroom environments, educators can use aggregated emotional data to refine teaching approaches, improve curriculum design, and support student mental health.
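A minimal sketch of that kind of aggregation, assuming per-student confusion scores tagged by lesson segment. The minimum-group-size rule is a privacy safeguard assumed here as a design choice, so that reported figures describe the class rather than individual students; the function name, field layout, and threshold are hypothetical.

```python
from collections import defaultdict
from statistics import mean

def aggregate_by_segment(observations, min_students=5):
    """observations: iterable of (lesson_segment, student_id, confusion_score).
    Returns mean confusion per segment, omitting segments observed for fewer
    than `min_students` distinct students."""
    scores = defaultdict(list)
    students = defaultdict(set)
    for segment, student_id, score in observations:
        scores[segment].append(score)
        students[segment].add(student_id)
    return {
        seg: mean(vals)
        for seg, vals in scores.items()
        if len(students[seg]) >= min_students
    }

obs = (
    [("intro", f"s{i}", 0.2) for i in range(5)]
    + [("fractions", f"s{i}", 0.6) for i in range(5)]
    + [("review", "s0", 0.9), ("review", "s1", 0.7)]  # too few students to report
)
summary = aggregate_by_segment(obs)
print(sorted(summary))  # ['fractions', 'intro']
```

A segment with unusually high average confusion is a candidate for redesign in the next iteration of the curriculum.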

This application of emotion recognition is particularly useful in hybrid and remote learning models, where traditional cues like body language are harder for instructors to interpret. By enhancing visibility into student engagement, emotion recognition solutions contribute to more empathetic and effective education systems.

Emotion Recognition in Entertainment and Media

The entertainment industry has also embraced emotion recognition software as a tool for creativity and audience understanding. Media producers use emotional analytics to gauge audience responses to movies, trailers, and advertisements. This insight goes beyond traditional metrics like view counts or ratings by attempting to measure emotional engagement directly.

Streaming platforms are experimenting with emotion-informed content recommendations, curating experiences that resonate emotionally with users based on past reactions. Content creators use these insights to enhance storytelling, tailor pacing, and optimize emotional impact.

Live events and gaming platforms also benefit from emotion recognition. In interactive games, systems can adjust difficulty levels, narrative paths, or in-game experiences based on player emotions in real time. Event organizers use emotional analytics to measure crowd sentiment, enhancing overall experience design.

Emotion recognition, in this context, becomes part of a feedback loop that connects creators and audiences more deeply than ever before.

Security and Law Enforcement: A Controversial Frontier

Emotion recognition software solutions are increasingly discussed in the context of security and law enforcement. Agencies explore the potential of real-time emotion detection systems for threat assessment, crowd monitoring, and behavioral analysis. The idea is to identify individuals who display signs of stress or agitation in sensitive environments such as airports, concerts, or public gatherings.

While the goal is public safety, this application raises complex ethical questions. Critics argue that interpreting emotions in high-stakes scenarios risks false positives, invasion of privacy, and misuse of sensitive data. The line between protective surveillance and intrusive monitoring is thin. As a result, governments and advocacy groups are debating guidelines and restrictions to ensure that such systems are deployed responsibly, if at all.
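The false-positive concern becomes concrete with a base-rate calculation. The numbers below are purely hypothetical and do not describe any real system: they show that even a detector with 99% sensitivity and a 1% false positive rate flags mostly innocent people when genuine threats are rare.

```python
def positive_predictive_value(prevalence, sensitivity, false_positive_rate):
    """Share of flagged people who are actually true positives (Bayes' rule)."""
    true_pos = sensitivity * prevalence
    false_pos = false_positive_rate * (1 - prevalence)
    return true_pos / (true_pos + false_pos)

# Illustrative assumptions: 1 in 10,000 people is a genuine threat; the system
# catches 99% of them but also wrongly flags 1% of everyone else.
ppv = positive_predictive_value(prevalence=1e-4, sensitivity=0.99,
                                false_positive_rate=0.01)
print(f"{ppv:.1%}")  # 1.0%
```

Under these assumptions, roughly 99 of every 100 people flagged would be false alarms, which is why accuracy figures alone say little about how such a system behaves in crowded public spaces.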

The future of emotion recognition in security settings will depend on robust legal frameworks, transparency, and public discourse to balance safety with civil liberties.

Integrating Emotion Recognition With Other AI Technologies

Emotion recognition software solutions are most powerful when integrated with other AI technologies. For example, combining emotion analytics with natural language processing (NLP) allows systems to understand not just what is being said but how it is being expressed emotionally. This fusion expands the depth of insights in customer support, communication tools, and sentiment analysis.

Integrating emotion recognition with predictive analytics helps businesses anticipate customer reactions before they happen. By analyzing historical emotional data and real-time interactions, companies can tailor experiences that feel more personal and intuitive.

When connected to conversational AI, emotion-informed chatbots and virtual assistants can adjust their tone and responses to the user's detected emotional state.

The synergy between emotion recognition and other AI domains enhances the sophistication and impact of intelligent systems.

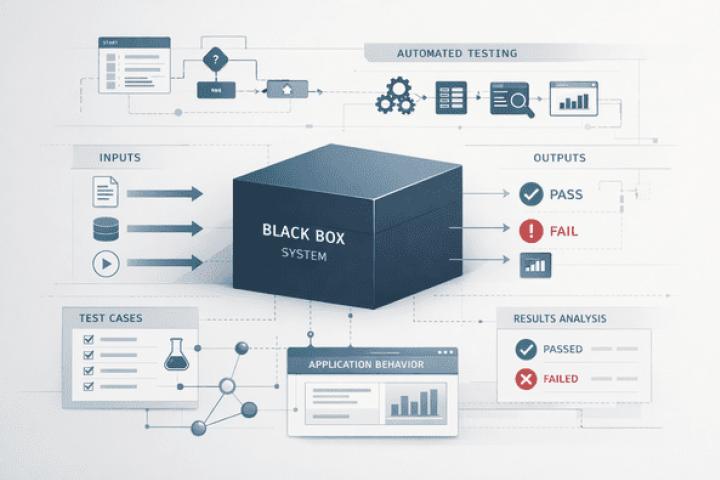

Challenges in Implementing Emotion Recognition Solutions

Despite the growing interest and practical applications, organizations often face challenges when adopting emotion recognition software solutions. One major challenge is data collection. High-quality emotional data requires careful setup, diverse datasets, and consent from users. Gathering meaningful emotional signals without being intrusive demands thoughtful design.

Another challenge is technical complexity. Emotion recognition models must be fine-tuned continuously to reduce errors and maintain relevance across different environments. Real-world conditions such as lighting changes, background noise, cultural differences, and individual variability complicate accuracy.

Security and compliance also present hurdles. Organizations must establish strong protocols to protect sensitive emotion data and comply with data protection regulations.

Addressing these challenges requires cross-functional collaboration between technical teams, legal experts, ethicists, and business leaders to ensure responsible deployment.

The Future of Emotion Recognition Software Solutions

Looking ahead, emotion recognition is likely to become more refined, personalized, and embedded into everyday technologies. Advances in affective computing — the broader field focused on understanding emotion through machines — are driving innovations that improve contextual understanding, reduce bias, and enhance accuracy.

Emotion recognition may evolve beyond visual and vocal signals to incorporate brain-computer interfaces, wearable sensors, and biometric feedback in ways that respect privacy and ethical boundaries. These developments could transform mental health monitoring, immersive experiences, adaptive learning systems, and human-machine collaboration.

As the technology matures, its influence may extend to previously unimaginable applications, fundamentally changing how humans interact with machines and each other.

Conclusion: Emotion Recognition in Business and Society

Emotion recognition software solutions represent a profound shift in how artificial intelligence understands human experience. By interpreting emotional signals, these systems provide insights that go beyond traditional analytics, enabling deeper empathy in customer interactions, improved healthcare outcomes, enriched educational experiences, and more engaging entertainment.

However, the adoption of emotion recognition technology also comes with ethical, privacy, and implementation challenges that cannot be ignored. Organizations must approach deployment thoughtfully, prioritizing transparency, fairness, and respect for users.

In 2026, emotion recognition stands at the cusp of becoming a transformative tool — not simply for technology leaders but for any organization that seeks to understand people more meaningfully. With responsible development and forward-thinking strategy, emotion recognition software solutions will continue shaping the future of human-centered innovation.